Big Data

Managing the data flood of digitalization

Digitalization leads to more data, higher dependency on data and higher complexity.

With our Big Data services we help you handle and manage your data flexibly and overcome the challenges of digital transformation. To minimize costs we work with flexible and scalable solutions. This means: we start small or run a Proof of Concept first and then adapt the Big Data infrastructure to your needs.

We have the right solution for you

Whether you need a Big Data solution to integrate data, to process data or simply as a data repository: we have worked with Oracle for many years, and we are a partner of Cloudera for their Hadoop distribution, of Amazon Web Services (AWS) and of Talend. This means we can draw on numerous technologies to find the right solution for you - tailored, of course, to your requirements and your infrastructure.

Cloudera Hadoop Distribution

Hadoop's extensive open source ecosystem offers many solutions, and it evolves continuously thanks to a large and active community. These solutions can be integrated into your infrastructure and are especially flexible when combined with a cloud approach - even without major investments.

Cloudera, the leading Hadoop distribution, bundles a useful collection of open source solutions and supplements it with Cloudera's own helpful tools. This considerably reduces the effort and risk of building a Hadoop solution. Projects can be executed faster and costs are reduced significantly.

You receive an extensive package of Apache Hadoop with Apache Spark, Apache Sqoop, Apache Kudu, Apache Flume, Apache HBase, Apache Hive, Apache Impala, Apache Kafka, Apache Parquet, Apache Sentry and more. Enterprise ready with a simple subscription concept.

As a Cloudera partner we have access to best practices, new developments and training. As a result, many of our employees are Cloudera-certified.

Talend

Talend offers extensive solutions, especially for data integration in big data projects. There are many connectors and jobs for most of the Apache projects within the Hadoop ecosystem and for NoSQL databases, whether in the cloud or on premises.

Topics like ETL jobs, data connection, data management and data preparation are made easy.

As a Talend partner we can advise and support you in all aspects of Talend - from selecting the right products and clarifying the correct licensing through to implementation and support.

Oracle

Besides Hadoop, we have many years of extensive experience with traditional databases as well as with dedicated Big Data solutions from Oracle. They offer a wide range of options - e.g. with Oracle Data Warehouse, Oracle Data Integrator, Oracle Database or Oracle Big Data Appliance, Oracle Big Data SQL and Oracle Big Data Discovery. Together with you, we develop a concept that fits your needs. We support you from design and implementation through optimization and migration to ongoing operation.

You benefit not only from our Oracle know-how but also from our experience as a Cloudera partner. For Hadoop, Oracle uses the Cloudera Hadoop Distribution (CDH). It is part of the Oracle Big Data Appliance.

Amazon Web Services (AWS)

As a partner of Amazon Web Services we can advise you on the advantages of Big Data solutions in the cloud: from a cloud data warehouse and Hadoop solutions for the cloud to the use of Amazon solutions like Amazon Redshift, Amazon QuickSight and AWS IoT.

Furthermore, we support you with Amazon data stores like Amazon DynamoDB and Amazon S3.

Microsoft

Several of our employees have many years of experience with Microsoft SQL Server. Based on this knowledge we advise you from design through implementation to the optimization and migration of Microsoft SQL Server installations. We can support you with Microsoft SQL Server and Microsoft SQL Server Integration Services through to visualization with Power BI, whether for a simple application or a complex data warehouse or big data project.

Open Source databases

New databases are released almost every year, many of them as open source solutions. NoSQL solutions designed for a specific use case in particular are growing in popularity. It is almost impossible to keep track of this wide variety of solutions. We have specialized in the following open source databases to support you:

- MySQL

- MongoDB

- PostgreSQL

- Apache HBase

- MariaDB

- CouchDB

- Neo4j

Do you use something different in your project? Thanks to their extensive experience, our database specialists can learn new concepts and databases quickly.

Just contact us – we will evaluate if we can support you the right way.

Open Source Software

Especially in the field of Big Data there are, besides the many open source databases, countless open source tools and applications. We support you with the following open source software:

- Apache Kafka

- Apache Spark and Spark Streaming

- Apache Impala

- Apache Kudu

- Apache Flume

Tableau

Tableau offers a wide range of options to visualize data and analysis results with reports, dashboards or graphics. But Tableau is more than just data visualization: you can use it for data preparation and data quality improvements as well.

Tableau works perfectly together with the Cloudera Hadoop Distribution and related tools like Apache Impala, Apache Kudu and Apache Spark. Therefore Tableau is our preferred tool for data analysis and data visualization for customers with a Hadoop infrastructure.

Our Services

We support you in all aspects of Big Data: from the optimization and integration of your data sources through data preparation and data storage to the analysis and visualization of the data.

Analysis

We analyse your data infrastructure, whether it is a traditional database, a NoSQL database or a complex Hadoop installation. We consider the performance of your current systems, your data management and the complexity of your data structure. As a result, we hand over a detailed report with recommendations.

Big Data Workshops

In our Big Data workshops we present the advantages and disadvantages of different Big Data solutions and analyse, together with you, the pros and cons for your company. Our recommendations for a suitable solution with the relevant architecture are summed up in a closing report.

We will discuss details and background with you in person.

Database Services - Consulting and Optimization

Data management also plays a major role in existing databases. Alongside dedicated Big Data technologies, they are a major contributor to the success of your data strategy.

Database services have been part of our portfolio for more than 10 years - from design to operations. We therefore support you in all aspects of databases, no matter whether it is Oracle, Microsoft SQL Server, MongoDB, MySQL, PostgreSQL, Amazon DynamoDB, Apache HBase or anything else.

For Oracle and Microsoft SQL Server in particular we have built up deep expert knowledge. Our experts speak at several international events - e.g. conferences of the German Oracle User Group (DOAG) or the Polish Oracle User Group (POUG).

Consulting – Use Case, Technology, Implementation

We are happy to advise you on evaluating your requirements, designing your use cases and selecting the right Big Data technologies for them. Together we define which process model and implementation approach fit best.

Designing your data strategy and your data management

Smart data management and a company-wide data strategy ensure transparency of your data and the related opportunities - across the whole company. Together with you we establish your data strategy and your guidelines for data management.

Planning and technical concept

We are happy to plan your Big Data solution and to support different concepts for fulfilling your requirements. Together with you we evaluate the different options and develop a realistic concept to give you a clear recommendation.

Proof of Concept

We believe that you don't always have to start with a large Big Data project. Quite the contrary: we believe in "Think big, start small, learn and grow fast" and usually start with a small Proof of Concept (PoC). This way we can test the options and evaluate the value for your company with limited effort.

This keeps your investment low. Thanks to open source solutions you don't have to pay high license fees. If you run the Proof of Concept in the cloud, you also save the cost of hardware etc.

Find and test the right solution at low cost.

Building your Big Data solution

Once the concept and architecture of your Big Data solution are defined, we support you in the implementation. You benefit especially from our experience within the Hadoop ecosystem, in particular with the Cloudera Hadoop Distribution, but also from our knowledge of Big Data and data warehouse solutions from Oracle, Amazon and Microsoft.

Data integration, developing jobs and processes

We master not only open source tools for data integration, for connecting data sources and for developing integration and data transfer jobs. As a Talend Gold Partner we are also experts in the Talend product range. With our knowledge of and experience with ETL and ELT jobs we can support you comprehensively - from definition and implementation through to optimization and maintenance.

Optimizing the data basis of your ETL and integration jobs, of your Big Data solution and queries

We ensure high performance of your solutions and prevent your data lake from becoming a data swamp: we regularly analyse and optimize your data basis as well as the data integration, your Big Data solution and the relevant queries.

Support and continuous development

We help you keep the performance of your solution high and develop and expand it further according to your needs. To this end, we support you with the continuous maintenance and development of your data warehouse or Big Data solution.

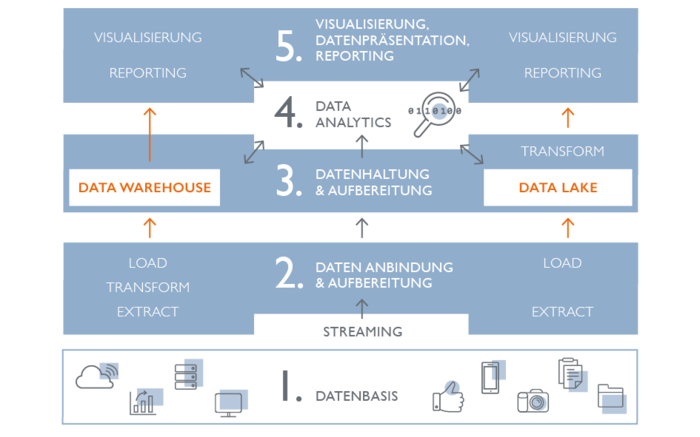

Our view on the world of big data and business intelligence

Big Data is an extremely broad field. In our opinion it starts with the data itself and the different data sources and extends through data integration and data preparation, data storage and analysis to visualization in the form of reports, dashboards and charts. We have experience in all these aspects, and with our partnerships we can offer numerous solutions.

Data - data basis

A major reason for Big Data solutions is the increasing complexity of the data basis. Besides traditional structured data, more and more unstructured data must be processed - sometimes in real time. Important drivers are the development of the Internet of Things (IoT), increasing automation and social media.

Thus the landscape of data sources that need to be integrated into Big Data solutions has changed considerably. Besides traditional databases, more and more specialized NoSQL databases are used.

We have years of experience in database consulting and in the optimization and maintenance of databases. Therefore we can support you comprehensively regarding the data basis and data sources.

For traditional databases our focus is on Oracle, Microsoft SQL Server, MySQL and PostgreSQL. At the same time we are experts in newer solutions like MongoDB, Neo4j, Amazon S3, Amazon DynamoDB, CouchDB or HBase - in the cloud or on premises.

Data connection, data integration and preparation

The right connection and integration of your data is as important as proper preparation. Therefore we support you in all three areas.

The data connection can be an interface between different applications, a message broker or a data transfer from several databases into a central enterprise data hub. Consequently, there are many possibilities. We help you identify the best fit.

The goal of data preparation is to consolidate the formats - especially in cases with many different data sources. In many cases, e.g. due to data privacy regulations, it is also necessary to document metadata such as the date of data capture, the author or the storage period.
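As an illustration, the following minimal Python sketch consolidates dates that arrive in different formats and stores capture date, author and storage period alongside each record. The date formats, the `PreparedRecord` class and all field and source names are hypothetical assumptions made for this example; they are not taken from a specific tool.

```python
from dataclasses import dataclass
from datetime import date, datetime

# Hypothetical date formats used by different source systems (assumption).
KNOWN_DATE_FORMATS = ("%Y-%m-%d", "%d.%m.%Y", "%m/%d/%Y")


@dataclass
class PreparedRecord:
    value: str           # payload: the date in one consolidated format
    captured_on: date    # metadata: date of data capture
    author: str          # metadata: who or what delivered the record
    retention_days: int  # metadata: storage period


def to_iso_date(raw: str) -> str:
    """Try each known format and return the date as YYYY-MM-DD."""
    for fmt in KNOWN_DATE_FORMATS:
        try:
            return datetime.strptime(raw, fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognised date format: {raw!r}")


def prepare(raw_date: str, author: str, captured_on: date,
            retention_days: int = 365) -> PreparedRecord:
    """Consolidate the format and attach the metadata in one step."""
    return PreparedRecord(to_iso_date(raw_date), captured_on, author, retention_days)


# Three hypothetical sources delivering the same date in different notations.
records = [
    prepare("2024-02-29", "crm_export", date(2024, 3, 1)),
    prepare("29.02.2024", "erp_export", date(2024, 3, 1)),
    prepare("02/29/2024", "web_shop", date(2024, 3, 2), retention_days=90),
]
```

In a real project the list of formats and the retention rules would come from the source systems and the applicable regulations rather than from constants in the code.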

For integration and preparation you usually write integration and transformation jobs - so-called ETL (Extract, Transform, Load) or ELT (Extract, Load, Transform) jobs. There are several tools for the different platforms, but platform-independent solutions are available as well.

We master the common tools for the Hadoop ecosystem, for Oracle, Microsoft and Amazon Web Services. Through our partnership with Talend we can also use an extensive platform-independent integration tool.

Data storage and data preparation

Whether in a traditional database, in a traditional data warehouse or in a modern Big Data solution: The optimal storage is performant, reliable and secure.

In many cases a traditional database is sufficient. With increasing requirements, however, the volume of data and the complexity of the data structure increase as well.

We help you develop the right data strategy and architecture to master your challenges. Thanks to our long-standing experience with databases and our partnerships with Cloudera and Amazon Web Services (AWS), we can draw on many different options to identify the best approach for your requirements:

Technologies we support:

- Cloudera Hadoop Infrastructure, in the cloud or on premises

- AWS Big Data solutions

- Oracle Big Data Appliance

- Spark Streaming

- Microsoft SQL Server

- Data warehouse from Oracle or Microsoft

Data Analytics

Data are a critical success factor - if you analyse them. This is the only way to gain deeper insights and generate useful information. Data Analytics applications are a meaningful complement to any Big Data solution. Many Data Analytics platforms therefore also offer solutions for visualizing the data and present the analysis results in the form of reports, graphics or dashboards.

We support you with our extensive knowledge in the area of Data Analytics.

Data presentation, visualization and reporting

The way you present the data and the results of the analysis determines how quickly and easily the employees of your company can evaluate and use them.

Standard products like QlikView, Microsoft Power BI or Tableau are simple to implement and easy to use. In many cases they are enough to create helpful overviews, tables, graphics, statistics or analyses. Depending on your requirements, it can be useful to integrate the visualization and the reports into an application or to develop a new application for them.

No matter which option fits you, we can support you with our extensive experience and knowledge. With open source solutions like JasperReports, Java frameworks like PrimeFaces, or Oracle APEX, we develop the best reports or dashboards for your data - of course also with responsive design or as a native app, so that you can use your application on mobile devices as well.

Traditional criteria for Big Data

Volume – the amount of data.

Variety – the complexity arising from different types of structured and unstructured data.

Velocity – the speed of data processing.

Veracity – the completeness or correctness of the data.

Why we see data warehouses in the world of Big Data

You may wonder why we include data warehouse solutions in our overview, as traditional data warehouse solutions mainly work with structured data. Even though the variety criterion from the traditional definition of Big Data is not fulfilled, in our view data warehouses are nevertheless related to Big Data solutions.

The reason: modern Big Data solutions like data lakes are often connected to data warehouse solutions. They supplement and overlap each other.

Data Warehouses and Data Lakes

Traditional data warehouses had the reputation of being structured and controlled but, at the same time, inflexible and slow. Data lakes were therefore developed to create a flexible and inexpensive infrastructure. The drawback: if you load huge amounts of data into a Big Data solution without control, you risk creating a big data garbage dump - a good example of how closely data warehouses are connected to the field of big data.

The Beginning: the traditional data warehouse model

The traditional data warehouse has been the main system in the area of business intelligence (BI) for decades. Structured data, mainly from relational databases, are extracted, processed and loaded into the data warehouse via ETL jobs. A limited number of users could access mainly commercial data and evaluate them through reports - a very structured and controlled but also inflexible process with low performance. The required infrastructure was often expensive and costly to maintain.

As data warehouses traditionally don't handle unstructured data and usually cannot cope with real-time requirements, they don't really belong to the world of Big Data.

Designed as an anti-pattern: Data Lakes

For some time the data lake was considered a kind of anti-pattern to the traditional data warehouse. This trend was certainly driven by the growing adoption of Hadoop as a flexible open source solution that can store complex mass data on cheap standard hardware.

The data lake concept was developed with the goal of creating a flexible and inexpensive infrastructure that can be scaled by adding standard hardware and that can handle unstructured data.

On this basis it was no longer necessary to select the data. Soon all kinds of data were pumped into these data lakes and stored there. The data could be prepared and their quality evaluated once they were actually used. This is how the Extract, Load, Transform (ELT) process became established.

Today both concepts are combined

Of course, an uncontrolled upload of data into a Big Data solution leads to data garbage. And even with Hadoop, storing useless data generates unnecessary costs. At the same time, data warehouse technologies have evolved remarkably. Therefore the two concepts are no longer anti-patterns.

Rather, you try to use the advantages of each by combining both concepts.

How both concepts complement each other – Use case

There are several use cases that show the value of a combined solution with a data warehouse and a Big Data solution. A common example is archiving data from the data warehouse in a Big Data solution. Older data from a data warehouse are usually archived - often outside the data warehouse. The archived data are then no longer available for queries, reports or analysis. If you instead move the old data into a Hadoop solution, for example, you relieve the data warehouse as well. This improves performance and reduces costs, as storage is usually significantly cheaper with Hadoop than within a data warehouse.

Furthermore, modern business intelligence and data analytics solutions can still access the data in the Hadoop solution. The data therefore remain available for requests, queries, evaluations, reports etc.
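The archiving use case can be sketched in a few lines of Python. This is only an illustration under stated assumptions: two in-memory SQLite databases stand in for the data warehouse and for the cheaper Big Data store, and the `sales` table, its columns and the cutoff date are hypothetical. A real setup would move the data into Hadoop and query it through a suitable engine.

```python
import sqlite3

# In-memory stand-ins (assumption): "main" plays the data warehouse,
# "archive" plays the cheaper Big Data store.
conn = sqlite3.connect(":memory:")
conn.execute("ATTACH DATABASE ':memory:' AS archive")
conn.execute("CREATE TABLE main.sales (order_id INTEGER, order_date TEXT, amount REAL)")
conn.execute("CREATE TABLE archive.sales (order_id INTEGER, order_date TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO main.sales VALUES (?, ?, ?)",
    [(1, "2015-03-01", 100.0), (2, "2016-07-15", 250.0), (3, "2023-01-10", 75.0)],
)


def archive_older_than(cutoff: str) -> int:
    """Move rows dated before `cutoff` (ISO date) from the warehouse to the archive."""
    with conn:  # one transaction: copy first, then delete
        conn.execute(
            "INSERT INTO archive.sales SELECT * FROM main.sales WHERE order_date < ?",
            (cutoff,),
        )
        deleted = conn.execute("DELETE FROM main.sales WHERE order_date < ?", (cutoff,))
    return deleted.rowcount


def total_revenue() -> float:
    """Reports still see archived rows by querying both stores together."""
    row = conn.execute(
        "SELECT SUM(amount) FROM ("
        "SELECT amount FROM main.sales UNION ALL SELECT amount FROM archive.sales)"
    ).fetchone()
    return row[0]


moved = archive_older_than("2020-01-01")
```

After the call, the warehouse table holds only recent rows, while `total_revenue()` still covers the complete history.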

The suitable solution for data integration - ETL vs. ELT

Should you prepare the data before you upload them to your target system, or later, once you process them? ETL and ELT describe the sequence of the different process steps in handling the data. Both approaches have advantages and disadvantages. We help you identify the right process for your requirements.

ETL

The traditional sequence, which is used in most solutions. The data are extracted (E - Extract) from the data source, processed and transformed (T - Transform) and finally loaded (L - Load) into the target system.

ELT

A sequence that is often useful in a very dynamic environment and in connection with Big Data solutions. The data are extracted (E - Extract) from the data source and loaded (L - Load) directly into the Big Data solution. Only once the data are used are they prepared and transformed (T - Transform).
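The difference between the two sequences can be shown with a small Python sketch. It is a simplified illustration, not a production job: the record layout, the `transform` step and the `RawStore` class are hypothetical assumptions, and plain Python lists stand in for the source and target systems.

```python
Record = dict[str, str]


def transform(record: Record) -> Record:
    """Hypothetical preparation step: trim whitespace, normalise the country code."""
    return {
        "customer": record["customer"].strip(),
        "country": record["country"].strip().upper(),
    }


def run_etl(source: list[Record]) -> list[Record]:
    """ETL: every record is transformed before it is loaded into the target."""
    target: list[Record] = []
    for record in source:                 # E - extract
        target.append(transform(record))  # T - transform, then L - load
    return target


class RawStore:
    """ELT: records are loaded untouched and transformed only when they are used."""

    def __init__(self) -> None:
        self.raw: list[Record] = []

    def load(self, source: list[Record]) -> None:
        self.raw.extend(source)  # E - extract, L - load (no preparation yet)

    def query(self) -> list[Record]:
        return [transform(r) for r in self.raw]  # T - transform on use


source = [
    {"customer": " Alice ", "country": "de"},
    {"customer": "Bob", "country": " pl "},
]

etl_target = run_etl(source)

store = RawStore()
store.load(source)
elt_result = store.query()
```

Both paths deliver the same prepared records. The difference lies in what is stored: the ETL target holds only cleaned data, while the ELT store keeps the raw records and pays the transformation cost at query time.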

Our offering for data integration

Besides several data integration tools from vendors like Oracle and Microsoft, we also use independent open source solutions, especially Talend.

Talend is the leading platform for data integration and preparation - regardless of whether the data are located in a data warehouse from IBM or Oracle, in a Hadoop cluster or in separate databases. Thanks to more than 900 connectors you benefit from fast and simple data integration with the necessary data preparation and quality assurance.

As a Talend Gold Partner we are not limited to advising and supporting you on the different Talend products. We can offer you products and services along the whole life cycle: from the first presentation through implementation to support and maintenance.

Our answers to Big Data questions

Why Big Data solutions are useful for IoT projects

Internet of Things (IoT) refers to the connection of devices, machines and sensors. This connection increases data traffic remarkably and creates huge amounts of unstructured data. Log files, communication protocols, sensor data or geo-information have to be received, processed and analysed in near real time. This is almost impossible with traditional database systems. You should therefore consider Big Data solutions for any of your IoT projects. We are happy to advise you on the possibilities.

Big Data for medium-sized companies

Big Data solutions increase efficiency, help to retain customers and thereby increase revenue and success sustainably - especially within small and medium-sized enterprises (SMEs). They are no longer a privilege of large corporations: with the wide range of open source solutions combined with the opportunities of the cloud, Big Data solutions can be realized even without big budgets and investments. We help you identify the right architecture to make use of these success factors.

Why data protection, IT security and professional data management are so important for Big Data solutions

Storage time, anonymization, regulated data protection measures and access rights: a thoughtfully chosen data strategy is especially important for Big Data solutions. The many opportunities of growing data volumes come with growing responsibility. The requirements for data protection grow as much as the penalties. Data theft scandals damage the image of your company.

As a Cloudera partner we can offer you extensive options, especially within the Hadoop environment.

We develop a suitable data strategy together with you and advise you on the security of your database and Big Data solution. Of course we also show you the options for a solution that complies with data protection requirements.

Data Agility

An agile and flexible handling of data is becoming more and more important for the success of your company. However, it also increases the challenges for your IT department: data become increasingly complex, near real-time processing is often required, and data are to be democratized across departmental boundaries. After all, you can make better decisions if everyone has access to the insights. Of course, access to the data should be organized in such a way that non-IT professionals can also create queries and analyses (self-service BI).

With these challenges, traditional database structures often reach their limits. Flexible and scalable Big Data solutions are in demand, as are smart data integration and data processing and strategic data management.